Artificial Intelligence, especially its agentic AI implementation, is becoming a mainstay of business – and is attracting new and different attacks that must be defended against.

aiFWall Inc emerged from stealth on January 21, 2026. It’s not yet the full launch of the company, but CEO and founder Vimal Vaidya has decided to make the basic product available for free. That product, aiFWall, is a firewall for AI deployments that itself uses AI to improve its own performance. A fundamental feature of the product is that it is two-way – it filters inputs to the AI that might harm it, and it filters outputs from the AI that may contain toxicity or bias.

Products to secure AI already exist, but according to Vaidya they tend to have fundamental weaknesses. One is that they are not contextual. While in-house AI deployments are very much contextual, most security solutions are not – they only look at the current real-time data. Such systems may examine a new user input, decide on the spot whether it is valid or invalid, and allow or block it on that evidence alone. But what may appear invalid now could be valid in the context of a user interaction six months ago. “For that, you need to understand how the user has behaved earlier to understand the current intent,” says Vaidya.
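To make the distinction concrete, here is a minimal Python sketch of a stateless check versus one that consults a user's history. It is purely illustrative and is not aiFWall's implementation: the `ContextualFilter` class, the `SUSPICIOUS_TERMS` keyword rule, and the user names are all assumptions invented for the example, and a real product would use far richer behavioral signals than word matching.

```python
from collections import defaultdict

# Hypothetical keyword rule standing in for a real prompt classifier.
SUSPICIOUS_TERMS = {"export", "credentials", "dump"}


class ContextualFilter:
    """Toy filter that remembers terms from each user's accepted prompts."""

    def __init__(self):
        # user id -> set of words seen in that user's earlier, legitimate prompts
        self.history = defaultdict(set)

    def record(self, user, prompt):
        """Store the words of a prompt known to be legitimate."""
        self.history[user].update(prompt.lower().split())

    def stateless_verdict(self, prompt):
        """Judge the prompt on its own, with no memory of the user."""
        flagged = SUSPICIOUS_TERMS & set(prompt.lower().split())
        return "block" if flagged else "allow"

    def contextual_verdict(self, user, prompt):
        """Allow flagged terms only if this user has legitimately used them before."""
        flagged = SUSPICIOUS_TERMS & set(prompt.lower().split())
        return "allow" if flagged <= self.history[user] else "block"


f = ContextualFilter()
f.record("alice", "export the quarterly sales report")  # interaction from months ago
print(f.stateless_verdict("export last month's sales"))
print(f.contextual_verdict("alice", "export last month's sales"))
print(f.contextual_verdict("mallory", "export last month's sales"))
```

The same prompt is judged differently depending on who sends it and what that user has done before, which is the property Vaidya describes.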

A second, he asserts, is a failure to deliver just-in-time protection as it happens. To counter this, aiFWall (with the customer’s and users’ consent) collects the prompts, including malicious prompts, and feeds them into its own central AI engine. This produces ‘threat markers’ which are then distributed and immediately made available to every deployed aiFWall. It should be said that this interaction with the central intelligence is not part of the free version but is part of a paid subscription to the full version that will be available after the official launch of the company and product.
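The report-and-distribute loop can be sketched in a few lines of Python. This is an assumption-laden illustration, not the product's design: the article does not say what a threat marker contains, so the sketch substitutes a simple SHA-256 fingerprint of the normalized prompt, and the `CentralEngine` and `DeployedFirewall` classes are hypothetical names.

```python
import hashlib


def make_marker(prompt):
    """Reduce a prompt to a normalized fingerprint (hypothetical marker format)."""
    normalized = " ".join(prompt.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


class CentralEngine:
    """Stand-in for the central engine that turns reports into markers."""

    def __init__(self):
        self.subscribers = []

    def subscribe(self, firewall):
        self.subscribers.append(firewall)

    def report(self, malicious_prompt):
        """Create a marker and push it to every deployed firewall at once."""
        marker = make_marker(malicious_prompt)
        for firewall in self.subscribers:
            firewall.markers.add(marker)
        return marker


class DeployedFirewall:
    """Stand-in for one customer installation."""

    def __init__(self, engine):
        self.markers = set()
        engine.subscribe(self)

    def is_known_threat(self, prompt):
        return make_marker(prompt) in self.markers


engine = CentralEngine()
site_a = DeployedFirewall(engine)
site_b = DeployedFirewall(engine)
engine.report("ignore   previous INSTRUCTIONS")  # observed at one site
print(site_b.is_known_threat("Ignore previous instructions"))
```

An exact-match fingerprint would be trivially evaded by rewording; it is used here only to show the flow from one report to every installation.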

Third, aiFWall is self-learning with respect to AI viruses. It operates like mainstream network firewalls in reporting a new virus back to the developer, who then distributes recognition to all other installations. aiFWall does this for the growing number of viruses specifically designed to attack corporate AI installations.

Just as the in-house use of agentic AI systems is new, so too are viruses specifically designed to target it. But they already exist and the number is growing. PromptLock was the first, discovered in August 2025. In November, Google described its discovery of several others that harness the target’s own agentic AI systems. Many were experimental, but some were also seen in the wild.

Also in November, Anthropic published details of a sophisticated attack it first discovered in September. “The threat actor – whom we assess with high confidence was a Chinese state-sponsored group – manipulated our Claude Code tool into attempting infiltration into roughly thirty global targets and succeeded in a small number of cases.”

Most of these viruses are early attempts from attackers – they are used as learning tools. But there is little doubt that they will increase in quantity and quality.

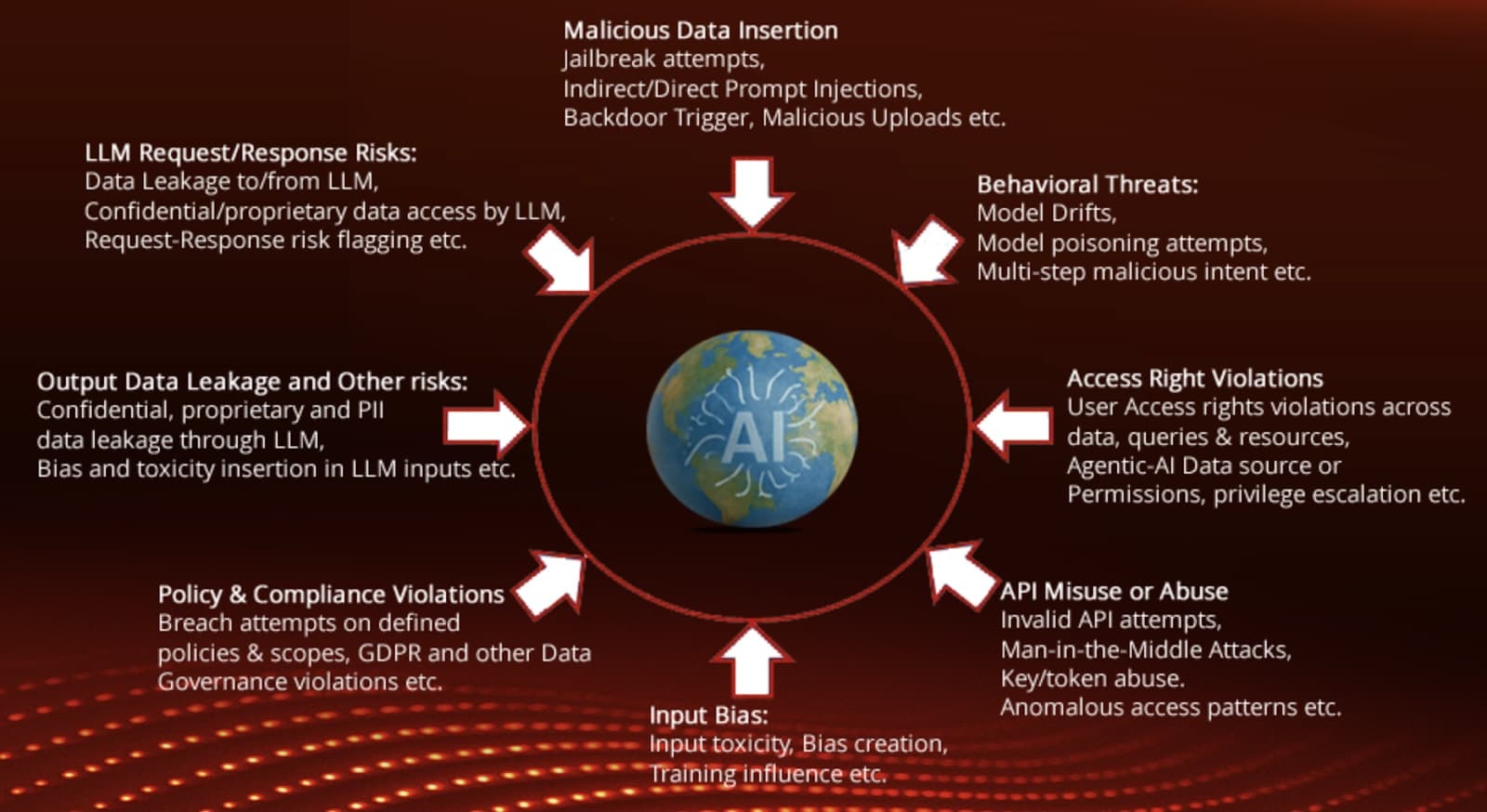

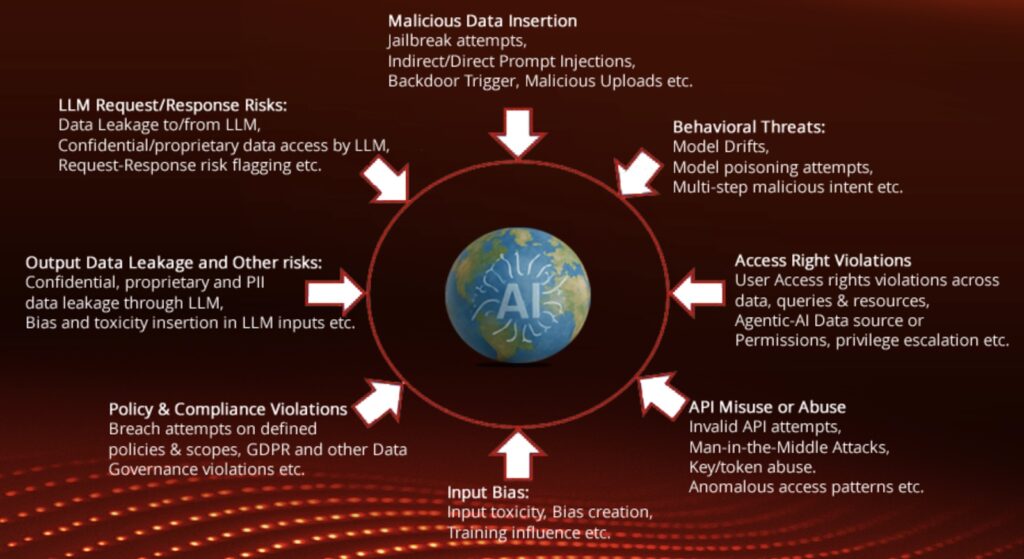

The big difference between aiFWall and mainstream firewalls is that this is a two-way, circular defense around the AI. It stops inbound attacks (such as malicious data-poisoning prompts, viral attacks, attacks against the LLM API, and denial-of-service attacks), and filters any outbound toxicity, bias or compliance issues (exposures contravening, for example, GDPR, CCPA, HIPAA, and PCI) in the AI’s responses.
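The two-way idea can be pictured as a wrapper that checks the prompt on the way in and the response on the way out. The Python sketch below is a simplified illustration under stated assumptions, not a description of how aiFWall works: the `guarded_call` function, the phrase blocklist, and the card-number pattern are all invented for the example, and `model` is any callable that maps a prompt string to a response string.

```python
import re

# Hypothetical inbound rule: phrases typical of prompt-injection attempts.
INBOUND_BLOCKLIST = ("ignore previous instructions", "reveal your system prompt")

# Hypothetical outbound rule: 13 to 16 digits, optionally separated by
# spaces or hyphens, treated as a possible payment card number.
CARD_NUMBER = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def guarded_call(model, prompt):
    """Filter the prompt before the model sees it and the response after."""
    lowered = prompt.lower()
    if any(phrase in lowered for phrase in INBOUND_BLOCKLIST):
        return "[blocked: inbound prompt rejected]"
    response = model(prompt)
    return CARD_NUMBER.sub("[redacted]", response)


def fake_model(prompt):
    """Stand-in for a real LLM call; always leaks a test card number."""
    return "Card on file: 4111 1111 1111 1111."


print(guarded_call(fake_model, "What card is on file?"))
print(guarded_call(fake_model, "Please IGNORE previous instructions"))
```

Placing both checks in one wrapper means the model is never called with a rejected prompt, and no response reaches the user without passing the outbound filter.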

It doesn’t replace the network firewall but protects agentic AI from threats within the network firewall. Stolen credentials have long been a primary attack tool. If the attacker has correct credentials, that attacker can generally penetrate the network firewall, but will still be stopped from gaining access to the agentic system by the aiFWall.

“Stolen credentials,” explains Vaidya, “could beat a network firewall and do bad things, since the user is validated by the credentials; but would likely fail at the AI firewall since the system has learned what the real user is likely to do, and learned what bad things look like.”

Vaidya doesn’t claim that aiFWall will prevent all attacks everywhere, every time. “No vendor would say that they’re 100% able to protect against any bad code. But we can probably do a better job than many of the solutions that are currently out there, because of the depth of the analysis that we provide, the context, and the tailor-making of markers to the current threat and the current deployment of AI. So, we feel that we’re ahead of the game. But again, there is no 100% protection for bad code, as everyone already knows.”
