Vibe coding generates a curate’s egg program: good in parts, but the bad parts affect the whole program.

Vibe coding, the use of AI to generate computer code, is increasingly popular. It allows any user who can write AI prompts to also write programs. Vibe coding speeds up development and reduces cost to the company – but questions over the immediate efficacy and long-term security of vibe coded apps persist.

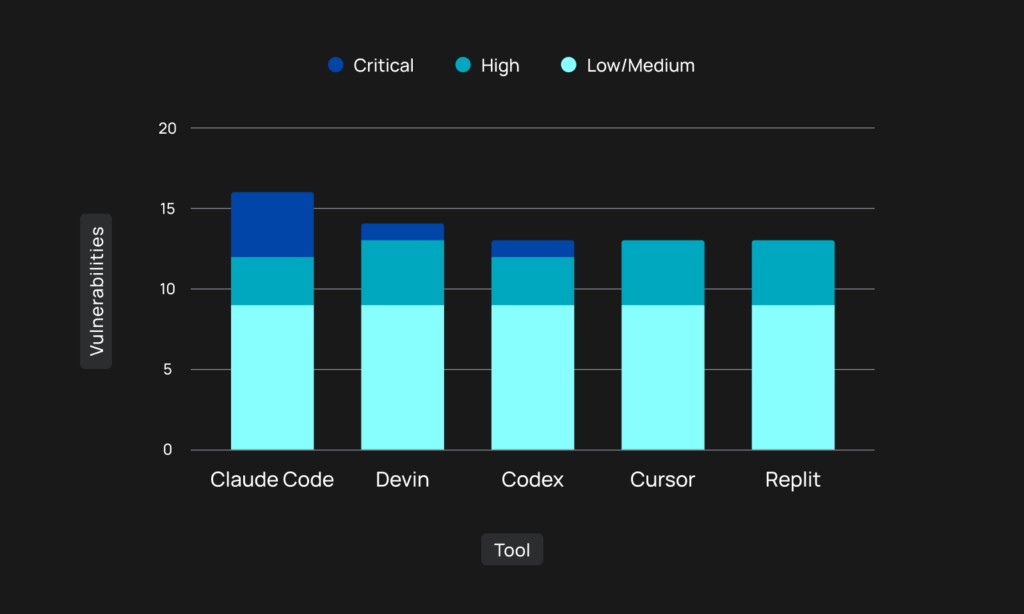

Tenzai has tested five major AI coding agents (Anysphere Cursor, Claude Code, OpenAI Codex, Replit, and Cognition Devin) to discover which is best and what could go wrong.

Each agent was tasked with building the same three apps from identical prompts in identical circumstances – and the 15 outputs were compared. Tenzai found a total of 69 vulnerabilities, ranging in severity from critical and high down to medium and low.

It seems that, in general, vibe coding is good at avoiding issues where good coding practices are well established; that is, where there are clear do and don’t rules. None of the generated apps contained an exploitable SQLi or XSS vulnerability.

The agents are less reliable where issues don’t have specific solutions. Authorization is an example: the agents handle the basic requirements well but falter when the authorization logic becomes more complex. “One of the most common issues we encountered was improper authorization when accessing APIs,” comments Tenzai. This should be a cause for concern: APIs have long been a primary target for cybercriminals.
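Tenzai's comments, as quoted here, do not detail the specific flaws. One common form of improper API authorization is an endpoint that confirms a caller is logged in but never checks that the requested record belongs to that caller. The following is a minimal sketch of the missing check, not code from the tests; `User`, `Order`, `ORDERS`, `Forbidden`, and `get_order` are hypothetical names, and the in-memory dictionary stands in for a real database.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    id: int
    is_admin: bool = False


@dataclass(frozen=True)
class Order:
    id: int
    owner_id: int
    total: float


class Forbidden(Exception):
    """Raised when an authenticated user may not access a resource."""


# Hypothetical in-memory store standing in for a database table.
ORDERS = {
    1: Order(1, owner_id=10, total=25.0),
    2: Order(2, owner_id=20, total=40.0),
}


def get_order(current_user: User, order_id: int) -> Order:
    """Return an order only if the caller owns it or is an administrator."""
    order = ORDERS.get(order_id)
    if order is None:
        raise KeyError(order_id)
    # The object-level check: being logged in is not enough,
    # the record must also belong to the caller.
    if order.owner_id != current_user.id and not current_user.is_admin:
        raise Forbidden(f"user {current_user.id} may not read order {order_id}")
    return order
```

In this sketch, a user with `id=10` can read order 1, but asking for order 2 raises `Forbidden`. Placing the check inside the data-access function, rather than in each route handler, is one way to make it harder to forget as the authorization logic grows.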

SSRF is another example. Tenzai included an ‘SSRF pitfall’ in one of its tests. “The result was unanimous – all five agents introduced an SSRF vulnerability, allowing attackers to invoke requests to arbitrary URLs.”
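The details of Tenzai's pitfall are not given here, but the general defense is to validate a user-supplied URL before the server fetches it. The sketch below is one possible approach using only the Python standard library: allow only `http` and `https`, resolve the hostname, and reject any address that is not publicly routable. The names `is_safe_url` and `resolve_host` are hypothetical, and the sketch does not address DNS rebinding or redirects, which a production control would also need to handle.

```python
import ipaddress
import socket
from typing import Callable, Iterable
from urllib.parse import urlsplit


def resolve_host(host: str) -> list[str]:
    """Resolve a hostname to its IP address strings via the system resolver."""
    return [info[4][0] for info in socket.getaddrinfo(host, None)]


def is_safe_url(
    url: str,
    resolve: Callable[[str], Iterable[str]] = resolve_host,
) -> bool:
    """Return True only for http(s) URLs whose host resolves to public addresses."""
    try:
        parts = urlsplit(url)
    except ValueError:
        return False
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    try:
        addresses = list(resolve(parts.hostname))
    except OSError:
        # Resolution failures (including socket.gaierror) are treated as unsafe.
        return False
    if not addresses:
        return False
    for address in addresses:
        try:
            ip = ipaddress.ip_address(address)
        except ValueError:
            return False
        # Rejects loopback, private, link-local and other non-public ranges.
        if not ip.is_global:
            return False
    return True
```

The `resolve` parameter exists so the check can be exercised without network access, for example `is_safe_url("http://internal.test/", resolve=lambda host: ["10.0.0.5"])`, which the function rejects because the address is private.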

Handling of business logic, which is common sense for humans, is also poor. This is not surprising in itself, since an AI coding agent can only work with what it is told. AI’s understanding of context is learned over time, not introduced by one-off vibe coding prompts. In the tests, when the prompts didn’t specify that a shop order must be positive, four of the five agents allowed negative orders. Similarly, three of the five agents allowed the creation of products with a negative price.
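The two rules the prompts left unstated are simple to express once someone thinks to state them. The following is a minimal sketch of such checks, not code from the tested apps; `ValidationError`, `validate_order_quantity`, and `validate_product_price` are hypothetical names, and whether a zero price should be allowed is a policy decision the sketch leaves open by permitting it.

```python
from decimal import Decimal


class ValidationError(ValueError):
    """Raised when input violates a business rule."""


def validate_order_quantity(quantity: int) -> int:
    """An order must be for a positive whole number of items."""
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(quantity, bool) or not isinstance(quantity, int):
        raise ValidationError("quantity must be an integer")
    if quantity <= 0:
        raise ValidationError("quantity must be positive")
    return quantity


def validate_product_price(price: Decimal) -> Decimal:
    """A product price must be a finite, non-negative decimal amount."""
    if not isinstance(price, Decimal) or not price.is_finite():
        raise ValidationError("price must be a finite Decimal")
    if price < 0:
        raise ValidationError("price must not be negative")
    return price
```

Calling `validate_order_quantity(-3)` or `validate_product_price(Decimal("-1.00"))` raises `ValidationError` instead of letting the value reach the order or product tables.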

While this could be classed as a fault in the prompting, it is indicative of the type of error that is likely to become more common as vibe coding is adopted by staff untrained in programming rigor.

What concerned Tenzai most was what the agents omitted: security controls. “All the coding agents, across every test we performed, failed miserably when it came to security controls. It wasn’t that they implemented them incorrectly, in almost all cases – they didn’t even try.”

Tenzai’s tests suggest that current vibe coding does not produce flawless code. In particular, it depends on very detailed and precise input prompts. Such prompts would improve the quality of the generated apps but would not guarantee production-ready output. Furthermore, we should not expect untrained vibe coders to be capable of the required level of rigor.

Vibe coding will not go away. The need for speed to maintain competitive edge in business, coupled with cost savings of using existing staff rather than employing qualified programmers, means it will inevitably increase in popularity. The coding agents will improve over time but will never be perfect for all apps in all circumstances.

Tenzai’s testing found 69 vulnerabilities in 15 generated apps, and found them rapidly using its own vulnerability product. Perhaps we need to move toward adding vibe testing to vibe coding.
